This project is immature and under active development; its contents will change rapidly.

Portable deep learning models with Causality

Causality is a free and open-source JavaScript library for building isomorphic machine learning pipelines. Roughly speaking, a model you train can be deployed to clients' devices through a web environment without rewriting your pipeline code.

Our reusable components are built on top of TensorFlow.js. They handle data preprocessing, representation inference, visualization, training and evaluation in both Node.js and web environments, and they expose the same APIs in both. This reduces the engineering effort needed to build production AI services. Because everything uses one language, developers can also simplify their development setup, reduce communication cost, share coding patterns and exchange ideas more easily.

Moreover, models are loaded onto clients' devices to perform inference, so personal or sensitive data is not exposed to the service provider. We are also investing in ensemble learning and the recent federated learning approach. Federated learning allows distributed training that preserves data privacy without requiring any global data storage.

Researchers can use the built-in datasets and prebuilt pipelines to prototype new model ideas and to make research results easy to review, present and reproduce. We hope developers and researchers will find this project worth contributing to and collaborating on, so that together we can push forward a new class of affordable, transparent deep learning services.

The commercial version of this library, Moderator, is our effort to moderate social network content in order to protect community culture. The AI moderator is trained on community-voted data. It transparently prevents bad content from propagating and re-ranks relevant content before clients view it, without revealing any personal preferences. Causality and Moderator, together with React Social Network, come from our startup, Red Gold. Red Gold aims to build a smarter social network that respects community culture and relies on a transparent AI moderator.

For example, we can build a simple logistic-regression-style classifier (two dense sigmoid layers) on a dummy dataset, then train, test and deploy it:

```javascript
import { causalNetSGDOptimizer } from 'causal-net.optimizers';
import { causalNetModels } from 'causal-net.models';
import { causalNetParameters, causalNetLayers } from 'causal-net.layer';
import { causalNet } from 'causal-net';
import { termLogger } from 'causal-net.log';

(async ()=>{
  // Dummy generator: one batch of three identical samples, all labelled as class 0.
  const DummyData = (batchSize)=>{
    let samples = [ [0,1,2,3],
                    [0,1,2,3],
                    [0,1,2,3] ];
    let labels = [ [1,0],
                   [1,0],
                   [1,0] ];
    return [{samples, labels}];
  };

  let emitCounter = 0;
  const PipeLineConfigure = {
    Dataset: {
      TrainDataGenerator: DummyData,
      TestDataGenerator: DummyData
    },
    Net: {
      Parameters: causalNetParameters.InitParameters(),
      Layers: {
        // 4 inputs -> 3 hidden units -> 2 outputs
        Predict: [ causalNetLayers.dense({ inputSize: 4, outputSize: 3, activator: 'sigmoid' }),
                   causalNetLayers.dense({ inputSize: 3, outputSize: 2, activator: 'sigmoid' })]
      },
      Model: causalNetModels.classification(2),
      Optimizer: causalNetSGDOptimizer.adam({learningRate: 0.01})
    },
    Deployment: {
      // Emits one input per second; from the fourth call on it resolves to null.
      Emitter: async ()=>{
        return new Promise((resolve, reject)=>{
          setTimeout(()=>{
            let data = (emitCounter < 3)?{Predict: [0,1,2,3]}:null;
            emitCounter += 1;
            termLogger.log({ emitter: data});
            resolve(data);
          }, 1000);
        });
      },
      // Called with each inference result.
      Listener: async (infer)=>{
        termLogger.log({ Listener: infer});
      }
    }
  };

  causalNet.setByConfig(PipeLineConfigure);
  const numEpochs=10, batchSize=3;
  let loss = await causalNet.train(numEpochs, batchSize);
  let plotId = termLogger.plot({ type:'line', data: loss,
                                 xLabel: '# of iter',
                                 yLabel: 'loss'});
  await termLogger.show({plotId});
  termLogger.log(await causalNet.test());
  let deployResult = await causalNet.deploy();
  termLogger.log({deployResult});
})();
```
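To make the training step concrete, here is a library-free sketch of plain binary logistic regression. It is a single sigmoid unit trained by batch gradient descent on binary cross-entropy. It does not use causal-net and is not how `causalNet.train` works internally. `trainLogistic`, its options and the AND-gate toy data are hypothetical choices made for illustration.

```javascript
// Hypothetical, library-free sketch (not causal-net's implementation):
// binary logistic regression trained by batch gradient descent on binary cross-entropy.
const sigmoid = (z) => 1 / (1 + Math.exp(-z));

function trainLogistic(samples, labels, { epochs = 500, learningRate = 0.1 } = {}) {
  const dim = samples[0].length;
  const w = new Array(dim).fill(0);
  let b = 0;
  const losses = [];
  const score = (x) => sigmoid(x.reduce((sum, xj, j) => sum + xj * w[j], b));
  for (let epoch = 0; epoch < epochs; epoch++) {
    const gradW = new Array(dim).fill(0);
    let gradB = 0;
    let loss = 0;
    samples.forEach((x, i) => {
      const p = score(x);
      const err = p - labels[i]; // dLoss/dz for sigmoid + binary cross-entropy
      x.forEach((xj, j) => { gradW[j] += err * xj; });
      gradB += err;
      loss -= labels[i] * Math.log(p) + (1 - labels[i]) * Math.log(1 - p);
    });
    const n = samples.length;
    for (let j = 0; j < dim; j++) w[j] -= (learningRate * gradW[j]) / n;
    b -= (learningRate * gradB) / n;
    losses.push(loss / n); // mean loss of this epoch, before the update
  }
  return { w, b, losses, predict: score };
}

// Usage: learn the AND gate.
const model = trainLogistic([[0, 0], [0, 1], [1, 0], [1, 1]], [0, 0, 0, 1],
                            { epochs: 2000, learningRate: 1 });
console.log(model.predict([1, 1]), model.predict([0, 0]));
```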

Introduction

Key design principles:

- All components are isomorphic.

- Self-explaining.

We do not use TypeScript because we want to avoid the early technical debt that comes with an unpaid "type tax".

Pipeline

Causality attempts to standardize a pipeline into the following steps:

- Sampling from raw data.

- Preprocessing data.

- Inferring a representation of the data.

- Training/ensemble training.

- Evaluation/ensemble evaluation.
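The ordering of these steps can be sketched without the library as a chain of functions, each taking the pipeline state and returning a new one. This is a hypothetical illustration, not causal-net's API: `runPipeline`, the step bodies and the "threshold classifier" are made up to keep the example tiny.

```javascript
// Hypothetical sketch, not causal-net's API: each step maps pipeline state to
// a new state, and the steps run in the standardized order.
const runPipeline = (rawData, steps) =>
  steps.reduce(
    (state, [name, step]) => ({ ...step(state), trace: [...state.trace, name] }),
    { data: rawData, trace: [] }
  );

const steps = [
  // sampling: keep every second record
  ['sampling', (s) => ({ ...s, data: s.data.filter((_, i) => i % 2 === 0) })],
  // preprocessing: divide each value by 10
  ['preprocessing', (s) => ({ ...s, data: s.data.map((x) => x / 10) })],
  // representation: toy feature map x -> [x, x^2]
  ['representation', (s) => ({ ...s, features: s.data.map((x) => [x, x * x]) })],
  // training: a toy threshold classifier, threshold = mean of the first feature
  ['training', (s) => ({ ...s, threshold: s.features.reduce((a, [x]) => a + x, 0) / s.features.length })],
  // evaluation: fraction of records at or above the threshold
  ['evaluation', (s) => ({ ...s, score: s.features.filter(([x]) => x >= s.threshold).length / s.features.length })],
];

const result = runPipeline([0, 1, 2, 3, 4, 5, 6, 7, 8, 9], steps);
console.log(result.trace, result.threshold, result.score);
```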


The ensemble version of the classification example above trains three named models in turn with ensembleTrain:

```javascript
import { causalNetSGDOptimizer } from 'causal-net.optimizers';
import { causalNetModels } from 'causal-net.models';
import { causalNetParameters, causalNetLayers } from 'causal-net.layer';
import { causalNet } from 'causal-net';
import { termLogger } from 'causal-net.log';

(async ()=>{
  const DummyData = (batchSize)=>{
    let samples = [ [0,1,2,3],
                    [0,1,2,3],
                    [0,1,2,3] ];
    let labels = [ [1,0],
                   [1,0],
                   [1,0] ];
    return [{samples, labels}];
  };

  let emitCounter = 0;
  const PipeLineConfigure = {
    Dataset: {
      TrainDataGenerator: DummyData,
      TestDataGenerator: DummyData
    },
    Net: {
      Parameters: causalNetParameters.InitParameters(),
      Layers: {
        Predict: [ causalNetLayers.dense({ inputSize: 4, outputSize: 3, activator: 'sigmoid' }),
                   causalNetLayers.dense({ inputSize: 3, outputSize: 2, activator: 'sigmoid' })]
      },
      Model: causalNetModels.classification(2),
      Optimizer: causalNetSGDOptimizer.adam({learningRate: 0.01})
    },
    Deployment: {
      Emitter: async ()=>{
        return new Promise((resolve, reject)=>{
          setTimeout(()=>{
            let data = (emitCounter < 3)?{Predict: [0,1,2,3]}:null;
            emitCounter += 1;
            termLogger.log({ emitter: data});
            resolve(data);
          }, 1000);
        });
      },
      Listener: async (infer)=>{
        termLogger.log({ Listener: infer});
      }
    }
  };

  causalNet.setByConfig(PipeLineConfigure);

  // Train each named ensemble member and merge the per-model results for plotting.
  let models = ['Model1', 'Model2', 'Model3'];
  let losses = {};
  const numEpochs=10, batchSize=3;
  for(let model of models){
    let result = await causalNet.ensembleTrain(numEpochs, batchSize, model);
    losses = {...losses, ...result};
  }

  let plotId = termLogger.plot({ type:'line', data: losses,
                                 xLabel: '# of iter',
                                 yLabel: 'loss'});
  await termLogger.show({plotId});
  termLogger.log(await causalNet.test());
  let deployResult = await causalNet.deploy();
  termLogger.log({deployResult});
})();
```
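One common way to combine ensemble members at prediction time is to average their class probabilities and take the arg-max. The sketch below assumes that averaging rule purely for illustration. How causal-net actually combines its ensemble members is not described here, and `ensemblePredict` is a hypothetical helper.

```javascript
// Hypothetical sketch: average the class-probability outputs of several models,
// then pick the class with the highest mean probability.
function ensemblePredict(memberOutputs) {
  const numClasses = memberOutputs[0].length;
  const avg = new Array(numClasses).fill(0);
  for (const probs of memberOutputs) {
    probs.forEach((p, k) => { avg[k] += p / memberOutputs.length; });
  }
  const label = avg.indexOf(Math.max(...avg));
  return { avg, label };
}

// Outputs of three models (e.g. 'Model1', 'Model2', 'Model3') for one sample.
const { avg, label } = ensemblePredict([[0.9, 0.1], [0.4, 0.6], [0.7, 0.3]]);
console.log(avg, label); // label is 0
```

Averaging lets a single confident but wrong member be outvoted, as with the second model above.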

Monorepo

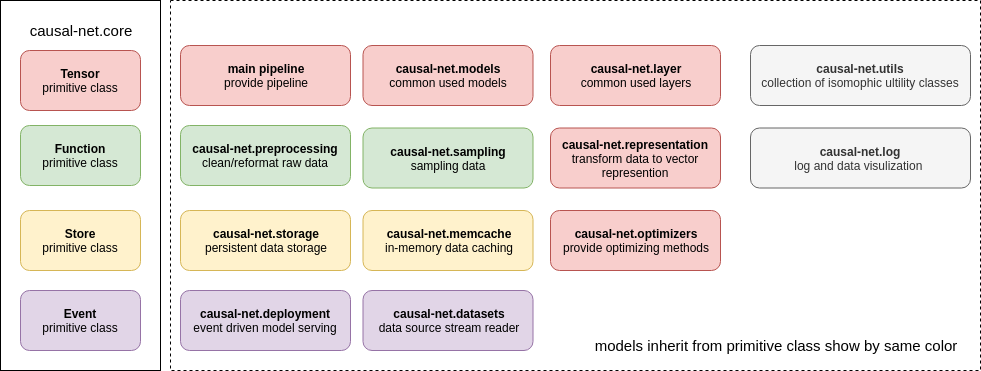

Causality is organized as a monorepo of sub-package plugins for building up a pipeline.

Causality makes intensive use of mixins to compose classes. Mixins let us build elastic classes that import just enough methods for the target use, which avoids redundant methods and reduces bundle size. The main mixins for building a pipeline class are in the /src/ folder, which also holds the prebuilt CausalNet pipeline, ready to use (see the tutorials section). Advanced mixins are separated into sub-packages under the /packages/ folder. Each sub-package exports at most one mixin for building a pipeline; for example, causality-optimizer provides trainerMixins for optimizing parameters.
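The mixin idea can be sketched without the library using the "class factory" pattern. Each mixin is a function that takes a base class and returns a subclass with a few extra methods, and a `mixWith` helper applies them in order. This is a hypothetical re-implementation for illustration. `platform.mixWith` in causal-net.utils may work differently, and `loggerMixin`/`trainerMixin` are made-up names, not the package exports.

```javascript
// Hypothetical sketch of mixin composition; causal-net's platform.mixWith may differ.
const mixWith = (Base, mixins) => mixins.reduce((Cls, mixin) => mixin(Cls), Base);

class Base {}

// Adds a Logger attribute.
const loggerMixin = (Parent) => class extends Parent {
  set Logger(logger) { this._logger = logger; }
  get Logger() { return this._logger; }
};

// Adds a train method that relies on the Logger attribute.
const trainerMixin = (Parent) => class extends Parent {
  train(numEpochs) {
    for (let epoch = 0; epoch < numEpochs; epoch++) this.Logger.log(`epoch ${epoch}`);
    return numEpochs;
  }
};

// The pipeline only carries the methods of the mixins it lists.
class SimplePipeline extends mixWith(Base, [loggerMixin, trainerMixin]) {
  constructor(logger) {
    super();
    this.Logger = logger;
  }
}

new SimplePipeline(console).train(2);
```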

Project module view

causal-net.core

This package provides:

causalNetCore

Provides access to the core functor and core tensor instances.

```javascript
import { causalNetCore } from 'causal-net.core';

console.log(causalNetCore.CoreTensor);
console.log(causalNetCore.CoreFunctor);
```

Tensor

Primitive class for composing Tensor-based classes. This class is based on TensorFlow.js.

```javascript
import { Tensor, causalNetCore } from 'causal-net.core';

let tensor = new Tensor();
let T = causalNetCore.CoreTensor;
let ta = T.tensor([1, 2]);
console.log(tensor.isTensor(ta));       // a core tensor
console.log(tensor.isTensor([1,2,3]));  // a plain array
```

Functor

Primitive class for composing Functor-based classes. This class is based on Ramda.

```javascript
import { Functor } from 'causal-net.core';

(async ()=>{
  let functor = new Functor();
  console.log(functor.range(10));
  console.log(functor.zeros(10));
  console.log(functor.ones(10));
})();
```

Store

Primitive class for composing Store-based classes. This class is based on levelup.

Event

Primitive class for composing Event-based classes. This class extends EventEmitter.

```javascript
import { Event } from 'causal-net.core';

(async ()=>{
  let eventA = new Event();
  let eventB = new Event();
  eventA.on('data', (data)=>{
    console.log({'event handler': data});
    return 'this is done';
  });
  console.log(await eventA.emit('data', [1,2,3]));
  console.log('send event');
  // Pipe eventB into eventA, so events emitted on eventB also reach eventA's handler.
  eventB.pipe(eventA);
  console.log(await eventB.emit('data', ['1,2,3']));
})();
```

causal-net.datasets

This package provides:

CausalNetDataSource

This class is the standard implementation of a pipeline Source, accessible through the causalNetDataSource instance.

```javascript
import { causalNetDataSource } from 'causal-net.datasets';

(async ()=>{
  // Connect to a dataset folder and print its description.
  let description = await causalNetDataSource.connect('../../datasets/MNIST_dataset_NoSplit/');
  console.log( description );
  console.log( causalNetDataSource.SampleSize );
  console.log( causalNetDataSource.chunkSelect(1) );
  const SampleReader = causalNetDataSource.SampleReader;
  const LabelReader = causalNetDataSource.LabelReader;
  // Read the samples and labels of each selected chunk.
  for(let { Sample, Label, ChunkName } of causalNetDataSource.chunkSelect(1) ){
    let sampleData = await SampleReader(Sample);
    let labelData = await LabelReader(Label);
    console.log({ ChunkName,
                  [Sample]: sampleData.length,
                  [Label]: labelData.length });
  }
  let readreport = await causalNetDataSource.read();
  console.log({ readreport });
})().catch(console.error);
```

DatasetMixins

This mixin class provides the attribute DataSourceReader and the method read, and handles the Source setting of pipelineConfig.

```javascript
import { causalNetDataSource, DataSourceMixins } from 'causal-net.datasets';
import { PreprocessingMixins,
         causalNetPreprocessingStream } from 'causal-net.preprocessing';
import { causalNetCore, Functor as BaseFunctor } from 'causal-net.core';
import { termLogger, LoggerMixins } from 'causal-net.log';
import { platform } from 'causal-net.utils';

const R = causalNetCore.CoreFunctor;

const sampleTransformer = (chunkSamples) => {
  console.log({chunkSamples: chunkSamples.length});
  return chunkSamples;
};

const labelTransformer = (chunkLabels) => {
  console.log({chunkLabel: chunkLabels.length});
  return chunkLabels;
};

const PipeLineConfigure = {
  Dataset: {
    Source: causalNetDataSource,
    Preprocessing: {
      SampleTransformer: sampleTransformer,
      LabelTransformer: labelTransformer
    }
  }
};

class SimpleDataset extends platform.mixWith(BaseFunctor,
                                             [ PreprocessingMixins,
                                               DataSourceMixins,
                                               LoggerMixins ]){
  constructor( preprocessing, logger ){
    super();
    this.Preprocessing = preprocessing;
    this.Logger = logger;
  }
}

(async ()=>{
  await causalNetDataSource.connect('../../datasets/MNIST_dataset_NoSplit/');
  let dataset = new SimpleDataset( causalNetPreprocessingStream, termLogger );
  dataset.setByConfig(PipeLineConfigure);
  dataset.DataSourceReader.chunkSelect(1);
  console.log( await dataset.read() );
})().catch(console.error);
```

causal-net.deployment

This package provides:

causalNetDeployment

The implementation of event-based model deployment, supplied to a pipeline class instance as its Deployment attribute. The pipeline class must be mixed with DeploymentMixins.

```javascript
import { causalNetDeployment } from 'causal-net.deployment';

(async ()=>{
  let emitCounter = 0;
  // Emits one input per second; from the fourth call on it resolves to null.
  causalNetDeployment.Emitter = async ()=>{
    return new Promise((resolve, reject)=>{
      setTimeout(()=>{
        let data = (emitCounter < 3)?{Predict: [0,1,2,3]}:null;
        emitCounter += 1;
        console.log({ emitter: data});
        resolve(data);
      }, 1000);
    });
  };
  // Receives the inferencer's output.
  causalNetDeployment.Listener = async (data)=>{
    console.log({listener: data});
  };
  // Stand-in inferencer that returns its input unchanged.
  causalNetDeployment.Inferencer = (data)=>{
    console.log({'inferencer': data});
    return data;
  };
  console.log(await causalNetDeployment.deploy());
})().catch(console.error);
```
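The Emitter → Inferencer → Listener flow can be sketched as a plain async loop. It pulls data from the emitter, runs inference, hands the result to the listener, and stops once the emitter resolves to null. This is a hypothetical illustration under that stop-on-null assumption, not the code behind `causalNetDeployment.deploy()`. The `{ handled }` report is made up.

```javascript
// Hypothetical sketch of an event-style deployment loop (not causal-net's deploy()).
async function deploy({ Emitter, Inferencer, Listener }) {
  let handled = 0;
  // Keep pulling until the emitter signals the end of the stream with null.
  for (let data = await Emitter(); data !== null; data = await Emitter()) {
    await Listener(Inferencer(data));
    handled += 1;
  }
  return { handled };
}

// Usage: emit three inputs, then null; the "inferencer" just sums the input.
let emitCounter = 0;
const results = [];
deploy({
  Emitter: async () => (emitCounter++ < 3 ? { Predict: [0, 1, 2, 3] } : null),
  Inferencer: (data) => data.Predict.reduce((a, b) => a + b, 0),
  Listener: async (infer) => { results.push(infer); },
}).then((report) => console.log(report, results));
```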

DeploymentMixins

This mixin class provides the attributes Deployment and Inferencer, and handles the Deployment setting of pipelineConfig.

```javascript
import { causalNetSGDOptimizer, TrainerMixins, EvaluatorMixins } from 'causal-net.optimizers';
import { causalNetModels, ModelMixins } from 'causal-net.models';
import { causalNetParameters, causalNetLayers, causalNetRunner, LayerRunnerMixins } from 'causal-net.layer';
import { causalNetCore, Functor, Tensor } from 'causal-net.core';
import { platform } from 'causal-net.utils';
import { causalNetDeployment, DeploymentMixins } from 'causal-net.deployment';
import { termLogger, LoggerMixins } from 'causal-net.log';

class SimplePipeline extends platform.mixWith(Tensor, [
    LayerRunnerMixins,
    ModelMixins,
    EvaluatorMixins,
    TrainerMixins,
    LoggerMixins,
    DeploymentMixins ]){
  constructor( netRunner, functor, logger, deployment){
    super();
    this.F = functor;
    this.LayerRunner = netRunner;
    this.Logger = logger;
    this.Deployment = deployment;
  }
}

const T = causalNetCore.CoreTensor;
const F = new Functor();

const DummyData = (batchSize)=>{
  let samples = [ [0,1,2,3],
                  [0,1,2,3],
                  [0,1,2,3] ];
  let labels = [ [1,0],
                 [1,0],
                 [1,0] ];
  return [{samples, labels}];
};

console.log(F.range(10));
console.log(F.enumerate([0,1,2,3,4]));
console.log(DummyData(1));

(async ()=>{
  let emitCounter = 0;
  const PipeLineConfigure = {
    Dataset: {
      TrainDataGenerator: DummyData,
      TestDataGenerator: DummyData
    },
    Net: {
      Parameters: causalNetParameters.InitParameters(),
      Layers: {
        // Same object form as causalNetLayers.dense({inputSize, outputSize}) elsewhere in this README.
        Predict: [ causalNetLayers.dense({ inputSize: 4, outputSize: 3 }),
                   causalNetLayers.dense({ inputSize: 3, outputSize: 2 })]
      },
      Model: causalNetModels.classification(2),
      Optimizer: causalNetSGDOptimizer.adam({learningRate: 0.01})
    },
    Deployment: {
      Emitter: async ()=>{
        return new Promise((resolve, reject)=>{
          setTimeout(()=>{
            let data = (emitCounter < 3)?{Predict: [0,1,2,3]}:null;
            emitCounter += 1;
            console.log({ emitter: data});
            resolve(data);
          }, 1000);
        });
      },
      Listener: async (infer)=>{
        console.log({ Listener: infer});
      }
    }
  };

  let pipeline = new SimplePipeline( causalNetRunner, F, termLogger, causalNetDeployment);
  pipeline.setByConfig(PipeLineConfigure);
  let predictInfer = pipeline.PredictModel( T.tensor([[1,2,3,4]]) );
  predictInfer.print();
  // deploy() is not awaited, so deployment runs while the model trains.
  pipeline.deploy().then(res=>console.log(res));
  console.log(await pipeline.train(100, 1));
})().catch(err=>{
  console.error({err});
});
```

causal-net.layer

This module provides:

CausalNetLayers

This class provides commonly used layers, accessible through the causalNetLayers instance.

```javascript
import { causalNetLayers } from 'causal-net.layer';

let denseLayer = causalNetLayers.dense({inputSize:3,outputSize:2});
console.log({denseLayer: denseLayer.Config});
```
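A dense layer computes y = activation(x·W + b), where W has shape [inputSize, outputSize]. The library-free sketch below is meant to clarify what `dense({ inputSize, outputSize, activator })` is assumed to compute. It is not causal-net's implementation, and the weights are arbitrary example values.

```javascript
// Hypothetical sketch of a dense layer forward pass: y_j = activation(sum_i x_i * W[i][j] + b_j).
const sigmoid = (z) => 1 / (1 + Math.exp(-z));

function denseForward(x, W, b, activation = (z) => z) {
  return b.map((bias, j) =>
    activation(x.reduce((sum, xi, i) => sum + xi * W[i][j], bias)));
}

// inputSize = 3, outputSize = 2
const x = [1, 2, 3];
const W = [[0.1, -0.2],
           [0.0,  0.3],
           [0.5,  0.1]];
const b = [0.0, -0.5];
console.log(denseForward(x, W, b));          // linear output
console.log(denseForward(x, W, b, sigmoid)); // with a sigmoid activator
```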

CausalNetParameters

This class is the standard implementation of model parameters, accessible through the causalNetParameters instance.

```javascript
import { causalNetParameters, causalNetLayers } from 'causal-net.layer';

(async ()=>{
  const Layers = {
    Predict: [ causalNetLayers.dense({ inputSize: 4, outputSize: 3 }),
               causalNetLayers.dense({ inputSize: 3, outputSize: 2 })],
    Encode: [ causalNetLayers.dense({ inputSize: 4, outputSize: 2 }) ],
    Decode: [ causalNetLayers.dense({ inputSize: 4, outputSize: 2 }) ]
  };
  const Parameters = {};
  // Initialize parameters for every layer group, then export, save, list and reload them.
  console.log(causalNetParameters.InitParameters(Parameters)(Layers));
  console.log(await causalNetParameters.exportParameters());
  console.log(await causalNetParameters.saveParams('save0'));
  console.log(await causalNetParameters.getSavedParamList());
  console.log(await causalNetParameters.loadParams('save0'));
})();
```

CausalNetRunner

The CausalNetRunner class provides a standard net executor, which is supplied to a pipeline instance as its LayerRunner attribute.

```javascript
import { causalNetParameters, causalNetLayers, causalNetRunner } from 'causal-net.layer';
import { causalNetCore } from 'causal-net.core';

(async ()=>{
  const T = causalNetCore.CoreTensor;
  const Net = {
    Parameters: { Predict: null, Encode: null, Decode: null },
    Layers: {
      Predict: [ causalNetLayers.dense({ inputSize: 4, outputSize: 3 }),
                 causalNetLayers.dense({ inputSize: 3, outputSize: 2 })],
      Encode: [ causalNetLayers.dense({ inputSize: 4, outputSize: 2 }) ],
      Decode: [ causalNetLayers.dense({ inputSize: 4, outputSize: 2 }) ]
    }
  };
  console.log(causalNetParameters.setOrInitParams(Net.Layers, Net.Parameters));
  causalNetRunner.NetLayers = Net.Layers;
  causalNetRunner.NetParameters = causalNetParameters;

  // Each layer group can be run explicitly with run(), or through its named runner.
  let predictLayer = causalNetRunner.run(Net.Layers.Predict, T.tensor([[1,2,3,4]]),
                                         causalNetParameters.PredictParameters);
  predictLayer.print();
  const PredictRunner = causalNetRunner.Predictor;
  console.log(PredictRunner);
  predictLayer = PredictRunner(T.tensor([[1,2,3,4]]));
  predictLayer.print();

  let encodeLayer = causalNetRunner.run(Net.Layers.Encode, T.tensor([[1,2,3,4]]),
                                        causalNetParameters.EncodeParameters);
  encodeLayer.print();
  const EncodeRunner = causalNetRunner.Encoder;
  encodeLayer = EncodeRunner( T.tensor([[1,2,3,4]]) );
  encodeLayer.print();

  let decodeLayer = causalNetRunner.run(Net.Layers.Decode, T.tensor([[1,2,3,4]]),
                                        causalNetParameters.DecodeParameters);
  decodeLayer.print();
  const DecodeRunner = causalNetRunner.Decoder;
  decodeLayer = DecodeRunner( T.tensor([[1,2,3,4]]) );
  decodeLayer.print();
})();
```

LayerRunnerMixins

This mixin class provides the attributes ParameterInitializer and LayerRunner, and handles the Net setting of pipelineConfig.

```javascript
import { causalNetParameters, causalNetLayers, causalNetRunner, LayerRunnerMixins } from 'causal-net.layer';
import { causalNetCore, Tensor } from 'causal-net.core';
import { platform } from 'causal-net.utils';
import { termLogger } from 'causal-net.log';

const PipeLineConfigure = {
  Net: {
    Parameters: causalNetParameters.InitParameters(),
    Layers: {
      Predict: [ causalNetLayers.dense({inputSize:4,outputSize:2}) ],
      Encode: [ causalNetLayers.dense({inputSize:4,outputSize:2}) ],
      Decode: [ causalNetLayers.dense({inputSize:4,outputSize:2}) ]
    }
  }
};

class SimplePipeline extends platform.mixWith(Tensor, [ LayerRunnerMixins ]){
  constructor(layerRunner, logger){
    super();
    this.logger = logger;
    this.LayerRunner = layerRunner;
  }
}

const T = causalNetCore.CoreTensor;

(async ()=>{
  let pipeline = new SimplePipeline(causalNetRunner, termLogger);
  pipeline.setByConfig(PipeLineConfigure);
  const { Predictor, Encoder, Decoder } = pipeline.LayerRunner;
  console.log({ Predictor, Encoder, Decoder });
})().catch(err=>{
  console.error({err});
});
```

causal-net.log

This module provides:

TermLogger

This class is an isomorphic logger, accessible through the termLogger instance.

```javascript
import { termLogger } from 'causal-net.log';

termLogger.log('this is text');
termLogger.log({'name':'this is text'});
termLogger.log({'father':{'name':'this is text','alias':'this is another text'}});
termLogger.log({'father':{'name':{sub:'this is text'},'alias':'this is another text'}});
termLogger.log({'array':[0,1,2,3,4]});
termLogger.log({'array':[{a:0}, {b:1}, {c:2}, {d:4}, {e:6}]});

termLogger.Level = 'debug';
console.log(termLogger.Level);
termLogger.log({'not to show': true});
termLogger.Level = 'log';
console.log(termLogger.Level);

termLogger.progressBegin(5);
for(let i of [1,2,3,4,5]){
  termLogger.progressUpdate({current: i});
}
termLogger.progressEnd();

termLogger.groupBegin('group A');
termLogger.groupBegin('group B');
termLogger.groupBegin('group C');
termLogger.groupEnd();
termLogger.groupEnd();
termLogger.groupEnd();
```

Using the built-in plots (vivid)

```javascript
import { termLogger, vivid } from 'causal-net.log';

(async ()=>{
  termLogger.connect();
  termLogger.groupBegin('this is log');
  termLogger.log('this is log');

  let plotData = {
    type: 'scatter',
    data: {
      'X': [[0,0],[1,0],[0,1]],
      'Y': [[-1,-1],[-1,0],[0,-1]],
    },
    'xRange': [-2,2],
    'yRange': [-2,2],
    'xLabel': 'may be x',
    'yLabel': 'y unit',
    'title': 'test',
    style: { "body": {"font": "11px"} } };
  let plotId = termLogger.plot(plotData);
  await termLogger.show({plotId});

  const makeImageData = (offset, width=28, height=28)=>{
    let imageData = [];
    for (var x=0; x<width; x++) {
      for (var y=0; y<height; y++) {
        var pixelindex = (y * width + x) * 4;
        // Generate a xor pattern with some random noise
        var red = ((x+offset) % 256) ^ ((y+offset) % 256);
        var green = ((2*x+offset) % 256) ^ ((2*y+offset) % 256);
        var blue = 50 + Math.floor(Math.random()*100);
        // Rotate the colors
        blue = (blue + offset) % 256;
        // Set the pixel data
        imageData[pixelindex] = red;     // Red
        imageData[pixelindex+1] = green; // Green
        imageData[pixelindex+2] = blue;  // Blue
        imageData[pixelindex+3] = 255;   // Alpha
      }
    }
    return imageData;
  };

  let data = makeImageData(0);
  plotId = termLogger.plot({type: 'png', data, width:28, height:28, title:'test2'});
  await termLogger.show({plotId});

  plotData = {
    type: 'line',
    data: {
      'X': [1,2,4,6],
      'y': [3,4,5,6]
    },
    'xRange': [-2,2],
    'yRange': [-2,2],
    'xLabel': 'x unit',
    'yLabel': 'y unit',
    'title': 'test3',
    style: { "body": {"font": "11px"} } };
  plotId = termLogger.plot(plotData);
  await termLogger.show({plotId});
  termLogger.groupEnd('this is log');
})();
```

Vivid

This class provides commonly used plots, accessible through the vivid instance.

Line chart

```javascript
import { vivid } from 'causal-net.log';

(async ()=>{
  let plotData = {
    type: 'line',
    data: {
      'X': [1,2,4,6],
      'y': [3,4,5,6]
    },
    'xRange': [-2,2],
    'yRange': [-2,2],
    'xLabel': 'x unit',
    'yLabel': 'y unit',
    'title': 'test3',
    style: { "body": {"font": "11px"} } };
  let plotId = vivid.line(plotData);
  await vivid.show({plotId});
})();
```

Scatter chart

```javascript
import { vivid } from 'causal-net.log';

(async ()=>{
  let plotData = {
    type: 'scatter',
    data: {
      'X': [[0,0],[1,0],[0,1]],
      'Y': [[-1,-1],[-1,0],[0,-1]],
    },
    'xRange': [-2,2],
    'yRange': [-2,2],
    'xLabel': 'may be x',
    'yLabel': 'y unit',
    'title': 'test',
    style: { "body": {"font": "11px"} } };
  let plotId = vivid.scatter(plotData);
  await vivid.show({plotId});
})();
```

PNG

```javascript
import { vivid } from 'causal-net.log';

(async ()=>{
  const makeImageData = (offset, width=28, height=28)=>{
    let imageData = [];
    for (var x=0; x<width; x++) {
      for (var y=0; y<height; y++) {
        var pixelindex = (y * width + x) * 4;
        // Generate a xor pattern with some random noise
        var red = ((x+offset) % 256) ^ ((y+offset) % 256);
        var green = ((2*x+offset) % 256) ^ ((2*y+offset) % 256);
        var blue = 50 + Math.floor(Math.random()*100);
        // Rotate the colors
        blue = (blue + offset) % 256;
        // Set the pixel data
        imageData[pixelindex] = red;     // Red
        imageData[pixelindex+1] = green; // Green
        imageData[pixelindex+2] = blue;  // Blue
        imageData[pixelindex+3] = 255;   // Alpha
      }
    }
    return imageData;
  };

  let data = makeImageData(0);
  let plotId = vivid.png({type: 'png', data, width:28, height:28, title:'test2'});
  await vivid.show({plotId});
})();
```

LoggerMixins

This mixin class provides the attribute Logger.

```javascript
import { LoggerMixins, termLogger, BaseLogger } from 'causal-net.log';
import { platform } from 'causal-net.utils';
import { Tensor } from 'causal-net.core';

class SimplePipeline extends platform.mixWith(Tensor, [LoggerMixins]){
  constructor(){
    super();
    this.Logger = termLogger;
  }
}

let pipeline = new SimplePipeline();
console.log(pipeline.Logger instanceof BaseLogger);
```

causal-net.preprocessing

This module provides standard preprocessing instances for image and text data, and preprocessing mixins for pipelines.

nlpPreprocessing

Provides methods for text processing: tokenizing, filtering, and counting word frequencies.
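The three text steps can be sketched as plain functions: tokenize, filter out stop words, then count word frequencies. This is a hypothetical illustration. The regex tokenizer, the tiny stop-word list and the function names are assumptions, not nlpPreprocessing's actual rules or API.

```javascript
// Hypothetical sketch: tokenize -> filter -> count word frequency.
const STOP_WORDS = new Set(['a', 'an', 'the', 'is', 'this']);

// Lower-case the text and split it into runs of letters, digits and apostrophes.
const tokenize = (text) => text.toLowerCase().match(/[a-z0-9']+/g) || [];
const filterTokens = (tokens) => tokens.filter((t) => !STOP_WORDS.has(t));

function wordFrequency(tokens) {
  const counts = new Map();
  for (const t of tokens) counts.set(t, (counts.get(t) || 0) + 1);
  return counts;
}

const tokens = filterTokens(tokenize('This is a test. The test is short!'));
console.log(tokens);                // ['test', 'test', 'short']
console.log(wordFrequency(tokens)); // test -> 2, short -> 1
```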

imagePreprocessing

Provides methods for image processing: splitting and color transformation.
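As an example of a color transformation, the sketch below converts a flat RGBA pixel array to grayscale using the ITU-R BT.601 luma weights. The array holds four values per pixel, the same layout as `makeImageData` in the vivid examples. This is a hypothetical helper chosen for illustration, and imagePreprocessing's actual color transforms may differ.

```javascript
// Hypothetical sketch: RGBA (4 values per pixel) -> one gray value per pixel,
// using BT.601 luma weights; the alpha channel is ignored.
function rgbaToGray(rgba) {
  const gray = [];
  for (let p = 0; p < rgba.length; p += 4) {
    gray.push(Math.round(0.299 * rgba[p] + 0.587 * rgba[p + 1] + 0.114 * rgba[p + 2]));
  }
  return gray;
}

// white, black, pure red
console.log(rgbaToGray([255, 255, 255, 255, 0, 0, 0, 255, 255, 0, 0, 255])); // [255, 0, 76]
```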

PreprocessingMixins

Mixins to be mixed into a pipeline class or a dataset class.

causal-net.representation

This module provides:

CausalNetEmbedding

This class provides the standard implementation of text-to-vector transformation, accessible through the causalNetEmbedding instance.

Node

```javascript
import { causalNetEmbedding } from 'causal-net.representation';
import { termLogger } from 'causal-net.log';

(async ()=>{
  const configLink = '../../datasets/WordVec_EN/';
  await causalNetEmbedding.connect(configLink, true);
  // The first transform looks the words up in the storage cache.
  let vecs = await causalNetEmbedding.transform(['this', 'is', 'test']);
  for(let vec of vecs){
    termLogger.log({ vec });
  }
  // A second transform of the same words is served from the memory cache.
  vecs = await causalNetEmbedding.transform(['this', 'is', 'test']);
  for(let vec of vecs){
    termLogger.log({ vec });
  }
  // Returns a tensor representing the sentence.
  let sentVec = await causalNetEmbedding.sentenceEncode([ ['this', 'is', 'test'] ]);
  sentVec.print();
})().catch(err=>{
  console.error(err);
});
```
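A simple way to turn word vectors into one sentence vector is mean pooling, which averages the vectors dimension by dimension. Whether `sentenceEncode` does exactly this is an assumption. The sketch and its 3-dimensional vectors are made up for illustration.

```javascript
// Hypothetical sketch: mean pooling of word vectors into a sentence vector.
function meanPool(wordVecs) {
  const dim = wordVecs[0].length;
  const sum = new Array(dim).fill(0);
  for (const vec of wordVecs) vec.forEach((v, i) => { sum[i] += v; });
  return sum.map((s) => s / wordVecs.length);
}

// Made-up vectors for three words.
console.log(meanPool([[1, 0, 2], [3, 2, 0], [2, 1, 1]])); // [2, 1, 1]
```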

UniversalEmbedding

This class provides the standard implementation for transforming text into a single vector. It is based on USE (the Universal Sentence Encoder) and is accessible through the universalEmbedding instance.

```javascript
import { universalEmbedding } from 'causal-net.representation';
import { termLogger } from 'causal-net.log';
import { tokenizer } from 'causal-net.preprocessing';

(async ()=>{
  const BaseModelServer = 'http://0.0.0.0:8080/models/';
  termLogger.groupBegin('load model');
  await tokenizer.connect(BaseModelServer + 'use/vocab.json');
  // BaseModelServer already ends with '/', so the path must not start with one.
  await universalEmbedding.connect(BaseModelServer + 'use/tensorflowjs_model.json');
  termLogger.log('load finish');
  const asEncode = true;
  let tokens = [tokenizer.tokenize('dog', asEncode),
                tokenizer.tokenize('cat', asEncode)];
  termLogger.log({tokens});
  let sentVec = await universalEmbedding.sentenceEncode(tokens);
  sentVec.print();
  let score = await universalEmbedding.encodeMatching(tokens[0], tokens[1]);
  score.print();
  termLogger.groupEnd();
})().catch(console.error);
```
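A common way to score how closely two sentence embeddings match is cosine similarity. The score is 1 for vectors pointing the same way and 0 for orthogonal ones. The scoring function `encodeMatching` actually uses is not documented here, so this sketch is only an assumed illustration.

```javascript
// Hypothetical sketch: cosine similarity between two embedding vectors.
function cosineSimilarity(a, b) {
  let dot = 0, normA = 0, normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

console.log(cosineSimilarity([1, 2, 2], [2, 4, 4])); // 1 (same direction)
console.log(cosineSimilarity([1, 0], [0, 1]));       // 0 (orthogonal)
```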

RepresentationMixins

This mixin class provides the attribute Representation.

```javascript
import { RepresentationMixins, causalNetEmbedding } from 'causal-net.representation';
import { platform } from 'causal-net.utils';
import { Tensor } from 'causal-net.core';

const PipeLineConfigure = {
  Representation: {
    Embedding: causalNetEmbedding,
    EmbeddingConfig: '../../datasets/WordVec_EN/',
  }
};

class SimplePipeline extends platform.mixWith(Tensor, [RepresentationMixins]){
  constructor(configure){
    super();
    this.setRepresentationByConfig(configure);
  }
}

let pipeline = new SimplePipeline(PipeLineConfigure);
pipeline.connect();
console.log(pipeline.Representation);
```

causal-net.sampling

causal-net.sampling is a sub-module of the Causality project. It provides a sampling instance and sampling mixins.

CausalNetSampling

This class provides commonly used sampling methods, accessible through the causalNetSampling instance.

```javascript
import { causalNetSampling } from 'causal-net.sampling';
import { termLogger as Logger } from 'causal-net.log';

let numSamples = 4;
let idSize = 10; // id list: [0,1,2,3,4,5,6,7,8,9]
Logger.log(causalNetSampling.subSampling(numSamples, idSize));

numSamples = 4;
let positiveSampleId = [0, 1];
// ids: [0, 1, 2, 3]
let probIds = [0.9, 0.9, 0.3, 0.7];
let samples = causalNetSampling.negSampling(numSamples, positiveSampleId, probIds);
Logger.log({ samples });
```
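The two sampling calls can be sketched without the library. Sub-sampling picks `numSamples` distinct ids from `[0, idSize)` with a partial Fisher–Yates shuffle. For negative sampling, this sketch assumes that ids are drawn with replacement from the non-positive ids, with probability proportional to `probIds[id]`. That interpretation of `negSampling`'s arguments is an assumption, and both functions are hypothetical stand-ins rather than causalNetSampling's code.

```javascript
// Hypothetical sketches; causalNetSampling's exact semantics may differ.

// Pick numSamples distinct ids from [0, idSize) via a partial Fisher-Yates shuffle.
function subSampling(numSamples, idSize, random = Math.random) {
  if (numSamples > idSize) throw new RangeError('numSamples exceeds idSize');
  const ids = Array.from({ length: idSize }, (_, i) => i);
  for (let i = 0; i < numSamples; i++) {
    const j = i + Math.floor(random() * (idSize - i)); // j in [i, idSize)
    [ids[i], ids[j]] = [ids[j], ids[i]];
  }
  return ids.slice(0, numSamples);
}

// Assumed behaviour: draw numSamples ids (with replacement) from the ids that are
// not positive, each with probability proportional to probIds[id].
function negSampling(numSamples, positiveIds, probIds, random = Math.random) {
  const candidates = probIds.map((_, id) => id).filter((id) => !positiveIds.includes(id));
  if (candidates.length === 0) throw new RangeError('no negative candidates');
  const total = candidates.reduce((sum, id) => sum + probIds[id], 0);
  const samples = [];
  for (let n = 0; n < numSamples; n++) {
    let r = random() * total;
    let chosen = candidates[candidates.length - 1]; // fallback for rounding at the edge
    for (const id of candidates) {
      r -= probIds[id];
      if (r < 0) { chosen = id; break; }
    }
    samples.push(chosen);
  }
  return samples;
}

console.log(subSampling(4, 10));
console.log(negSampling(4, [0, 1], [0.9, 0.9, 0.3, 0.7]));
```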

SamplingMixins

This mixin class provides the attribute Sampling.

```javascript
import { SamplingMixins, causalNetSampling } from 'causal-net.sampling';
import { platform } from 'causal-net.utils';
import { Tensor, Functor } from 'causal-net.core';

console.log(causalNetSampling instanceof Functor);

class SimplePipeline extends platform.mixWith(Tensor, [SamplingMixins]){
  constructor(){
    super();
    this.Sampling = causalNetSampling;
  }
}

let pipeline = new SimplePipeline();
console.log(pipeline.Sampling);
```

causal-net.models

CausalNetModels

This class provides commonly used models, accessible through the causalNetModels instance.

- Classification models

```javascript
import { SingleLabelClassification } from 'causal-net.models';
import { causalNetCore } from 'causal-net.core';

let model = new SingleLabelClassification(2);
let T = causalNetCore.CoreTensor;
let inputs = T.tensor([[0.1, 0.2]], [1, 2], 'float32');
let labels = T.tensor([[0, 1]], [1, 2], 'float32');
// Stub runner: the predictor returns its input unchanged, so the model works on raw scores.
model.LayerRunner = { Predictor: (input)=>input };
model.Fit(inputs).print();
model.Loss(inputs, labels).print();
model.Predict(inputs).print();
model.OneHotPredict(inputs).print();
```
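For intuition about what a single-label classifier computes from raw scores, here is a library-free sketch of three operations. It turns scores into softmax probabilities, picks a one-hot arg-max, and computes categorical cross-entropy against a one-hot label. It reuses the numbers from the example above. That `Predict`, `OneHotPredict` and `Loss` compute exactly these quantities is an assumption.

```javascript
// Hypothetical sketch: softmax, one-hot arg-max and categorical cross-entropy.
const softmax = (scores) => {
  const max = Math.max(...scores); // subtract the max for numerical stability
  const exps = scores.map((s) => Math.exp(s - max));
  const sum = exps.reduce((a, b) => a + b, 0);
  return exps.map((e) => e / sum);
};

const oneHot = (probs) => {
  const k = probs.indexOf(Math.max(...probs));
  return probs.map((_, i) => (i === k ? 1 : 0));
};

const crossEntropy = (probs, label) =>
  -label.reduce((sum, y, i) => sum + y * Math.log(probs[i]), 0);

// Scores [0.1, 0.2] with label [0, 1], as in the example above.
const probs = softmax([0.1, 0.2]);
console.log(probs, oneHot(probs), crossEntropy(probs, [0, 1]));
```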

ModelMixins

This mixin class provides the attributes Model, LossModel, FitModel, OneHotPredictModel, and PredictModel, and handles the Model setting of pipelineConfig.Net.

```javascript
import { causalNetModels, ModelMixins } from 'causal-net.models';
import { causalNetParameters, causalNetLayers, causalNetRunner, LayerRunnerMixins } from 'causal-net.layer';
import { causalNetCore, Tensor } from 'causal-net.core';
import { platform } from 'causal-net.utils';
import { termLogger, LoggerMixins } from 'causal-net.log';

class SimplePipeline extends platform.mixWith(Tensor, [LayerRunnerMixins, ModelMixins, LoggerMixins]){
  constructor(netRunner, logger){
    super();
    this.Logger = logger;
    this.LayerRunner = netRunner;
  }
}

const T = causalNetCore.CoreTensor;

(async ()=>{
  let convLayer = causalNetLayers.convolution({kernelSize:[2,2], filters:[1,2], flatten:true} );
  let denseLayer = causalNetLayers.dense({inputSize:24,outputSize:2});
  const PipeLineConfigure = {
    Net: {
      Parameters: causalNetParameters.InitParameters(),
      Layers: {
        Predict: [ convLayer, denseLayer],
        Encode: [ causalNetLayers.dense({inputSize:24,outputSize:2}) ],
        Decode: [ causalNetLayers.dense({inputSize:24,outputSize:2}) ]
      },
      Model: causalNetModels.classification(2)
    }
  };
  let pipeline = new SimplePipeline( causalNetRunner, termLogger);
  pipeline.setByConfig(PipeLineConfigure);
  let inputTensor = T.tensor([ [1,2,3,4],
                               [1,2,3,4],
                               [1,2,3,4] ]).reshape([1,3,4,1]);
  let modelOneHotPredict = pipeline.OneHotPredictModel(inputTensor);
  modelOneHotPredict.print();
  let fit = pipeline.FitModel(inputTensor);
  fit.print();
  let modelLoss = pipeline.LossModel(inputTensor,
                                     T.tensor([[0, 1]]).asType('float32'));
  modelLoss.print();
})().catch(err=>{
  console.error({err});
});
```

causal-net.optimizers

The causal-net.optimizers package provides:

CausalNetSGDOptimizer

This class provides optimization methods, accessible through the causalNetSGDOptimizer instance.

```javascript
import { causalNetCore } from 'causal-net.core';
import { causalNetSGDOptimizer } from 'causal-net.optimizers';

const adam = causalNetSGDOptimizer.adam({learningRate: 0.01});
const T = causalNetCore.CoreTensor;
// a is a trainable variable; b is a constant tensor.
const a = T.variable(T.tensor([1,2,3,4]).reshape([2,2]));
const b = T.tensor([2,3,4,5]).reshape([2,2]);
// Objective to minimize: the mean of the element-wise product a * b.
const FitFn = ()=>{
  return a.mul(b).mean();
};
console.log( adam.fit(FitFn) );
a.print();
b.print();
```
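For reference, the sketch below shows the standard Adam update rule (Kingma and Ba) for a single scalar parameter, written without the library. It keeps exponential moving averages of the gradient and the squared gradient, corrects their bias, and scales the step by their ratio. `adamMinimize` is a hypothetical helper. That `causalNetSGDOptimizer.adam` applies the same rule element-wise through TensorFlow.js is an assumption.

```javascript
// Hypothetical, library-free sketch of Adam for one scalar parameter.
function adamMinimize(grad, x0, { learningRate = 0.01, beta1 = 0.9, beta2 = 0.999,
                                  epsilon = 1e-8, steps = 1000 } = {}) {
  let x = x0, m = 0, v = 0;
  for (let t = 1; t <= steps; t++) {
    const g = grad(x);
    m = beta1 * m + (1 - beta1) * g;     // first-moment (mean) estimate
    v = beta2 * v + (1 - beta2) * g * g; // second-moment estimate
    const mHat = m / (1 - beta1 ** t);   // bias corrections
    const vHat = v / (1 - beta2 ** t);
    x -= (learningRate * mHat) / (Math.sqrt(vHat) + epsilon);
  }
  return x;
}

// Minimize f(x) = (x - 3)^2, whose gradient is 2(x - 3); the result approaches 3.
console.log(adamMinimize((x) => 2 * (x - 3), 0, { learningRate: 0.1, steps: 500 }));
```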

TrainerMixins

This mixin class provides the attributes Optimizer, Trainer, and TrainDataGenerator and the method train. It also handles the Optimizer setting of pipelineConfig.Net and the TrainDataGenerator setting of pipelineConfig.Dataset.

import { causalNetSGDOptimizer, TrainerMixins, EvaluatorMixins } from 'causal-net.optimizers';

import { causalNetModels, ModelMixins } from 'causal-net.models';

import { causalNetParameters, causalNetLayers, causalNetRunner, LayerRunnerMixins } from 'causal-net.layer';

import { causalNetCore, Functor, Tensor } from 'causal-net.core';

import { platform } from 'causal-net.utils';

import { termLogger, LoggerMixins } from 'causal-net.log';

class SimplePipeline extends platform.mixWith(Tensor, [

LayerRunnerMixins,

ModelMixins,

EvaluatorMixins,

LoggerMixins,

TrainerMixins]){

constructor( netRunner, functor, logger){

super();

this.F = functor;

this.LayerRunner = netRunner;

this.Logger = logger;

}

}

const T = causalNetCore.CoreTensor;

const R = causalNetCore.CoreFunctor;

const F = new Functor();

const DummyData = (batchSize)=>{

let samples = [ [[0], [1], [2], [3]],

[[0], [1], [2], [3]],

[[0], [1], [2], [3]] ];

let labels = [ [0,1] ];

return [{samples, labels}];

}

console.log(DummyData(1));

(async ()=>{

let convLayer = causalNetLayers.convolution({ kernelSize: [2, 2],

filters: [1, 2],

flatten: true } );

let denseLayer = causalNetLayers.dense({ inputSize: 8, outputSize: 2 });

const PipeLineConfigure = {

Dataset: {

TrainDataGenerator: DummyData,

TestDataGenerator: DummyData,

},

Net: {

Parameters: causalNetParameters.InitParameters(),

Layers: { Predict: [ convLayer, denseLayer ] },

Model: causalNetModels.classification(2),

Optimizer: causalNetSGDOptimizer.adam({learningRate: 0.01})

}

};

let pipeline = new SimplePipeline( causalNetRunner, F, termLogger);

pipeline.setByConfig(PipeLineConfigure);

const NumEpochs = 10, BatchSize = 1;

console.log(await pipeline.train(NumEpochs, BatchSize));

console.log(await pipeline.test());

})();
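In the example above, DummyData is called with a batch size and returns an array of {samples, labels} objects. Assuming that is the contract TrainDataGenerator and TestDataGenerator expect (inferred from this example only; the exact tensor shapes causal-net expects inside each batch are not documented here), a generator over in-memory arrays could be sketched as follows. makeArrayGenerator is a hypothetical helper, not part of causal-net.

```javascript
// Hypothetical helper: builds a data generator over in-memory arrays.
// Assumes (from the DummyData example) that a generator takes batchSize and
// returns an array of { samples, labels } batches.
function makeArrayGenerator(allSamples, allLabels) {
  if (allSamples.length !== allLabels.length) {
    throw new Error('samples and labels must have the same length');
  }
  return (batchSize) => {
    const batches = [];
    // Slice both arrays in lockstep; the last batch holds any remainder.
    for (let start = 0; start < allSamples.length; start += batchSize) {
      batches.push({
        samples: allSamples.slice(start, start + batchSize),
        labels: allLabels.slice(start, start + batchSize),
      });
    }
    return batches;
  };
}

const samples = [[[0], [1]], [[1], [2]], [[2], [3]], [[3], [4]], [[4], [5]]];
const labels = [[1, 0], [0, 1], [1, 0], [0, 1], [1, 0]];
const generator = makeArrayGenerator(samples, labels);
const batches = generator(2);
console.log(batches.map((b) => b.samples.length)); // [ 2, 2, 1 ]
```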

EvaluatorMixins

This mixin class provides the test method and handles the TestDataGenerator setting of pipelineConfig.Dataset.

causal-net.memcache

memDownCache

This class is an in-memory cache implementation built on top of memdown, accessible via memDownCache.

import {memDownCache} from 'causal-net.memcache';

import {termLogger} from 'causal-net.log';

(async ()=>{

await memDownCache.setItem(123, '1223adfa');

termLogger.log({getItem: await memDownCache.getItem(123)});

})();
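For unit tests that should not depend on memdown, a stand-in exposing the same two awaited calls used above (setItem and getItem) might look like the sketch below. The class name InMemoryCache is hypothetical; keys follow JavaScript Map semantics (so 123 and '123' are different keys), and missing keys resolve to undefined. Both behaviours are choices of this sketch and may differ from memDownCache.

```javascript
// Hypothetical in-memory stand-in with the same async setItem/getItem calls
// used in the memDownCache example. Keys use Map semantics (123 !== '123'),
// which may differ from memdown's own key handling.
class InMemoryCache {
  constructor() {
    this.items = new Map();
  }

  async setItem(key, value) {
    this.items.set(key, value);
  }

  // Resolves to undefined for unknown keys (memdown itself may reject instead).
  async getItem(key) {
    return this.items.get(key);
  }
}

const cache = new InMemoryCache();
const demo = (async () => {
  await cache.setItem(123, '1223adfa');
  const value = await cache.getItem(123);
  console.log({ getItem: value });
  return value;
})();
```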

MemCacheMixins

This mixin class provides the MemCache attribute.

import {memDownCache, MemCacheMixins} from 'causal-net.memcache';

import {termLogger} from 'causal-net.log';

import { platform } from 'causal-net.utils';

import { Tensor, Store } from 'causal-net.core';

class SimplePipeline extends platform.mixWith(Tensor, [MemCacheMixins]){

constructor(){

super();

this.MemCache = memDownCache;

}

}

let pipeline = new SimplePipeline();

termLogger.log(pipeline.MemCache instanceof Store);

causal-net.storage

This module provides:

indexDBStorage

An isomorphic, high-performance key-value storage based on IndexedDB.

import { indexDBStorage } from 'causal-net.storage';

(async ()=>{

await indexDBStorage.writeFile('/temp','12345');

let content = await indexDBStorage.readFile('/temp');

console.log({content});

//get file list

let listFiles = await indexDBStorage.getFileList('/');

console.log({listFiles});

//fetch png image and save pixel data into file

const url = 'https://avatars3.githubusercontent.com/u/43268620?s=200&v=4';

await indexDBStorage.fetchPNGFile(url, 'icon');

const pixelArray = await indexDBStorage.readPNGFile('icon');

console.log({ pixelArray });

let ops = [

{ type: 'put', key: 'temp', value: '123445' },

{ type: 'del', key: 'temp' }];

//batch does not support 'get' type

let batchResult = await indexDBStorage.batch(ops);

console.log({batchResult});

})().catch(err=>{

console.error(err);

});
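The ops array above resembles the array form of LevelUP's batch(): a list of { type: 'put', key, value } and { type: 'del', key } entries applied in order, with 'get' not supported. The sketch below applies such a list to a plain Map to illustrate those semantics. applyBatch is hypothetical, and this document does not say whether indexDBStorage.batch is atomic, so validating every operation before applying any is a design choice of the sketch, not a documented guarantee.

```javascript
// Hypothetical illustration of batch semantics over a Map (not causal-net code).
// All operations are validated first, so an invalid op leaves the store unchanged.
function applyBatch(store, ops) {
  for (const op of ops) {
    if (op.type !== 'put' && op.type !== 'del') {
      throw new Error(`unsupported batch operation: ${op.type}`);
    }
  }
  // Apply in order, so a later 'del' undoes an earlier 'put' of the same key.
  for (const op of ops) {
    if (op.type === 'put') {
      store.set(op.key, op.value);
    } else {
      store.delete(op.key);
    }
  }
  return store;
}

const store = new Map();
applyBatch(store, [
  { type: 'put', key: 'temp', value: '123445' },
  { type: 'del', key: 'temp' },
]);
console.log(store.has('temp')); // false
```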

StorageMixins

This mixin class provides the Storage attribute.

import { StorageMixins, indexDBStorage } from 'causal-net.storage';

import { platform } from 'causal-net.utils';

import { Tensor, Store } from 'causal-net.core';

class SimplePipeline extends platform.mixWith(Tensor, [StorageMixins]){

constructor(storage){

super();

this.Storage = storage;

}

}

let pipeline = new SimplePipeline(indexDBStorage);

console.log(pipeline.Storage instanceof Store);

causal-net.utils

This module provides:

Platform

This class provides enhanced isomorphic mixins for the corresponding platform (node or web), accessible via platform.
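The MemCacheMixins and StorageMixins examples call platform.mixWith(Tensor, [ ... ]) to build a base class from several mixins. A common way to implement this in JavaScript is the subclass-factory pattern, where each mixin is a function from a base class to a subclass. The sketch below assumes that pattern; mixWith, Base, LoggerMixin and CacheMixin are hypothetical names, and the sketch does not reflect causal-net's actual implementation, which this document does not show.

```javascript
// Subclass-factory mixin sketch (hypothetical; not causal-net's implementation).
// Each mixin is a function that takes a base class and returns a subclass of it.
const mixWith = (Base, mixins) => mixins.reduce((Cls, mixin) => mixin(Cls), Base);

class Base {}

const LoggerMixin = (Superclass) =>
  class extends Superclass {
    log(message) {
      return `[log] ${message}`;
    }
  };

const CacheMixin = (Superclass) =>
  class extends Superclass {
    get MemCache() {
      return this._memCache;
    }
    set MemCache(cache) {
      this._memCache = cache;
    }
  };

// The resulting class inherits from Base and from every mixin in the list.
class SimplePipeline extends mixWith(Base, [LoggerMixin, CacheMixin]) {
  constructor(cache) {
    super();
    this.MemCache = cache;
  }
}

const pipeline = new SimplePipeline(new Map());
console.log(pipeline instanceof Base, pipeline.log('ready'), pipeline.MemCache instanceof Map);
```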


Fetch

This class provides an isomorphic fetch, accessible via fetch.

import { fetch } from 'causal-net.utils';

(async ()=>{

let link = 'https://avatars3.githubusercontent.com/u/43268620?s=200&v=4';

let content = await fetch.fetchData(link);

console.log({'content length': content.length});

})();

PNG

This class provides an isomorphic PNG parser, accessible via pngUtils.

Web/Node:

import { pngUtils } from 'causal-net.utils';

(async ()=>{

const link = 'https://avatars3.githubusercontent.com/u/43268620?s=200&v=4';

let fetchedData = await pngUtils.fetchPNG(link);

console.log(fetchedData.length);

})();

Node:

import { pngUtils } from 'causal-net.utils';

(async ()=>{

let data = await pngUtils.readPNG('../../datasets/icon.png');

console.log(data.length);

await pngUtils.writePNG(data, [200, 200, 4], './out.png');

})();
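writePNG is called with the shape [200, 200, 4], which suggests the pixel data is a flat array of RGBA values with 4 channels per pixel. Assuming row-major order with shape [height, width, channels] (the example cannot distinguish height from width because the image is square), a single pixel can be located as shown below; pixelOffset and getPixel are hypothetical helpers.

```javascript
// Hypothetical helpers for a flat, row-major RGBA buffer of shape [height, width, channels].
function pixelOffset(x, y, width, channels = 4) {
  return (y * width + x) * channels;
}

function getPixel(data, x, y, [height, width, channels]) {
  if (x < 0 || x >= width || y < 0 || y >= height) {
    throw new RangeError(`pixel (${x}, ${y}) is outside a ${width}x${height} image`);
  }
  const offset = pixelOffset(x, y, width, channels);
  return Array.from(data.slice(offset, offset + channels));
}

// 2x2 image: red, green on the first row; blue, white on the second (RGBA, 8 bits per channel).
const data = Uint8Array.from([
  255, 0, 0, 255,   0, 255, 0, 255,
  0, 0, 255, 255,   255, 255, 255, 255,
]);
console.log(getPixel(data, 1, 0, [2, 2, 4])); // [ 0, 255, 0, 255 ]
```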

CSV

This class provides an isomorphic CSV parser, accessible via csvUtils.

Node:

import { csvUtils } from 'causal-net.utils';

(async ()=>{

let data = await csvUtils.readCSV('./credict.csv');

console.log(data);

let headers = Object.keys(data[0]);

await csvUtils.writeCSV(headers, data, './output.csv');

data = await csvUtils.readCSV('./output.csv');

console.log(data);

// console.log(await csvUtils.chunkCSV('./output.csv',3,'./chunk-{}.csv'));

// const csvlink = 'https://media.githubusercontent.com/media/red-gold/causality/master/datasets/credict.csv';

// data = await csvUtils.fetchCSV(csvlink);

// console.log(data);

// data = [{'a':'a','text':'"this is text\n,;"'}];

// await csvUtils.writeCSV(['a','text'], data, './output.csv');

// console.log(await csvUtils.readCSV('./output.csv'));

})();
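The commented-out lines above exercise a value containing quotes, a newline and a comma. The usual CSV convention (RFC 4180) wraps such fields in double quotes and doubles any embedded quotes. The sketch below serializes rows shaped like readCSV's output (objects keyed by header) that way. toCSV and escapeField are hypothetical helpers, and they join records with '\n' rather than RFC 4180's CRLF, so this is not a description of how csvUtils.writeCSV behaves.

```javascript
// Hypothetical CSV serializer following RFC 4180 quoting rules
// (uses '\n' instead of CRLF between records).
function escapeField(value) {
  const text = value === undefined || value === null ? '' : String(value);
  // Quote fields that contain a delimiter, a quote, or a line break.
  if (/[",\r\n]/.test(text)) {
    return `"${text.replace(/"/g, '""')}"`;
  }
  return text;
}

function toCSV(headers, rows) {
  const lines = [headers.map(escapeField).join(',')];
  for (const row of rows) {
    lines.push(headers.map((header) => escapeField(row[header])).join(','));
  }
  return lines.join('\n');
}

const csv = toCSV(['a', 'text'], [{ a: 'a', text: 'say "hi", then\nleave' }]);
console.log(csv);
```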

Stream

This class provides isomorphic streams (Readable, Writable and Duplex), accessible via stream.

import { stream } from 'causal-net.utils';

let reader = stream.makeReadable();

const TransformFn = (chunkData, chunkEncoding, afterTransformFn) =>{

chunkData.x = (chunkData.x+1.5);

// the first callback argument is an error (null on success)

let error = null;

afterTransformFn(error, chunkData);

};

let transformer = stream.makeTransform(TransformFn);

const WriteFn = (chunkData, chunkEncoding, callback) =>{

console.log({chunkData});

callback();

};

let writer = stream.makeWritable(WriteFn);

reader.pipe(transformer).pipe(writer);

//push a random number every 100 ms

setInterval(() => {

reader.push({ x: Math.random() });

}, 100);
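For comparison, the same read, transform and write chain can be built with Node's built-in stream module in object mode. This is only a Node-side sketch: it assumes, without confirmation from this document, that stream.makeReadable, makeTransform and makeWritable wrap similar primitives. A fixed list of inputs replaces the setInterval source so the pipeline ends, and the code is written as CommonJS so it runs directly with node.

```javascript
// Node-only sketch using the built-in stream module (object mode throughout).
const { Readable, Transform, Writable, pipeline } = require('stream');

const results = [];

// Readable.from creates an object-mode stream from an iterable.
const reader = Readable.from([{ x: 0 }, { x: 0.25 }, { x: 0.5 }]);

const transformer = new Transform({
  objectMode: true,
  transform(chunkData, chunkEncoding, callback) {
    // First argument is an error (null on success), second is the output chunk.
    callback(null, { x: chunkData.x + 1.5 });
  },
});

const writer = new Writable({
  objectMode: true,
  write(chunkData, chunkEncoding, callback) {
    results.push(chunkData);
    callback();
  },
});

// pipeline wires the streams together and reports the first error, if any.
const done = new Promise((resolve, reject) => {
  pipeline(reader, transformer, writer, (err) => (err ? reject(err) : resolve(results)));
});

done.then((output) => console.log(output));
```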

Assert

This class provides an enhanced isomorphic assert that learns a schema from a sample value, accessible via assert.

import { assert } from 'causal-net.utils';

assert.seemMatchSample([2,2,3], [1,2,3], 'validate sample');

assert.seemMatchSample('sample text', 'pattern text', 'validate sample');

assert.seemMatchSample( { 'text' : 'pattern text 1', 'number' : 1123 },

{ 'text' : 'pattern text', 'number' : 1123 } , 'validate sample');

try{

assert.seemMatchSample(['2',2,3], [1,2,3], 'validate sample');

}

catch(err){

//error due to mismatch schema

console.log(err.message);

};

class A{};

let a = new A();

assert.beInstanceOf(a, A);

try{

assert.beInstanceOf('1', A);

}

catch(err){

console.log(err.message);

}
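seemMatchSample checks a value against a schema learnt from a sample, but its exact rules are not given here. The sketch below shows one plausible interpretation that agrees with the examples above: primitives only need the same type, arrays are treated as "array of the first sample element's type", and objects must contain every sample key with a matching value. matchesSample is hypothetical and its rules are assumptions, not causal-net's implementation.

```javascript
// Hypothetical sample-based matcher: infers a loose schema from `sample`
// and checks whether `value` fits it. Rules are illustrative assumptions.
function matchesSample(value, sample) {
  if (Array.isArray(sample)) {
    if (!Array.isArray(value)) return false;
    // Treat the sample as "array of <type of first element>".
    return sample.length === 0 || value.every((item) => matchesSample(item, sample[0]));
  }
  if (sample !== null && typeof sample === 'object') {
    if (value === null || typeof value !== 'object' || Array.isArray(value)) return false;
    // Every key in the sample must be present with a matching value.
    return Object.keys(sample).every((key) => key in value && matchesSample(value[key], sample[key]));
  }
  // Primitives: only the type has to agree, not the value.
  return typeof value === typeof sample;
}

console.log(matchesSample([2, 2, 3], [1, 2, 3]));          // true
console.log(matchesSample('sample text', 'pattern text')); // true
console.log(matchesSample(['2', 2, 3], [1, 2, 3]));        // false
```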

Tutorials

Stream processing with text8 data

Read the raw text8 corpus file and count the occurrences of each token in the corpus.

import * as Preprocessing from 'causal-net.preprocessing';

import * as Log from 'causal-net.log';

import * as Utils from 'causal-net.utils';

import * as Storage from 'causal-net.storage';

import * as fs from 'fs';

var { indexDBStorage } = Storage;

var { stream } = Utils;

var { termLogger } = Log;

var { nlpPreprocessing, tokenizerEN } = Preprocessing;


Create the stream process:

- read chunks from the corpus file;

- transform each chunk into tokens and update the word counts;

- write each transformed chunk into new files.

var remainingChars = '', wordFreqCount = {}, lineIndex = 0;

function tranformFn(chunkData, chunkEncoding, afterTransformFn){

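// note: remainingChars is never reassigned, so a line split across two chunks is processed as two fragments

let sampleText = chunkData + remainingChars;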

let sampleLines = sampleText.split('\n');

let transformedData = [];

for(let line of sampleLines){

let tokens = tokenizerEN.tokenize(line);

wordFreqCount = nlpPreprocessing.wordFreqCount(tokens, wordFreqCount);

lineIndex += 1;

transformedData.push({lineIndex, tokens});

}

afterTransformFn(null, transformedData);

};

var transformer = stream.makeTransform(tranformFn);

function writeTokens(transformedData, chunkEncoding, afterWriteFn){

const WriteTokensToFile = async (transformedData)=>{

for(let {lineIndex, tokens} of transformedData){

// console.log({lineIndex});

await indexDBStorage.writeFile(`/corpus/line_${lineIndex}`, JSON.stringify(tokens));

}

}

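WriteTokensToFile(transformedData).then(()=>{

afterWriteFn();

}, (err)=>{

// propagate write errors to the stream instead of silently dropping them

afterWriteFn(err);

});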

}

var writer = stream.makeWritable(writeTokens);

var characterCount = 0;

(async ()=>{

var corpusReader = fs.createReadStream('../datasets/text8/text8.txt');

const CorpusStreamer = stream.makePipeline([corpusReader, transformer, writer], (data)=>{

characterCount += data.length;

});

termLogger.groupBegin('stream performance');

let result = await CorpusStreamer;

termLogger.groupEnd()

termLogger.log({ result, characterCount } );

})();

stream performance: begin at Fri Mar 15 2019 16:42:45 GMT+0700 (Indochina Time)

stream performance: end after 8514 (ms)

{ result: 'Success', characterCount: 100000000 }

termLogger.log({'show 100 items': Object.entries(wordFreqCount).slice(0,100)});

{ 'show 100 items':

[ [ 'anarchism', 303 ],

[ 'originated', 572 ],

[ 'as', 131819 ],

[ 'a', 325895 ],

[ 'term', 7220 ],

[ 'of', 593676 ],

[ 'abuse', 563 ],

[ 'first', 28809 ],

[ 'used', 22736 ],

[ 'against', 8431 ],

[ 'early', 10172 ],

[ 'working', 2270 ],

[ 'class', 3412 ],

[ 'radicals', 116 ],

[ 'including', 9630 ],

[ 'the', 1061363 ],

[ 'diggers', 25 ],

[ 'english', 11868 ],

[ 'revolution', 2029 ],

[ 'and', 416615 ],

[ 'sans', 68 ],

[ 'culottes', 6 ],

[ 'french', 8736 ],

[ 'whilst', 481 ],

[ 'is', 183158 ],

[ 'still', 7378 ],

[ 'in', 372203 ],

[ 'pejorative', 114 ],

[ 'way', 6432 ],

[ 'to', 316375 ],

[ 'describe', 1352 ],

[ 'any', 11804 ],

[ 'act', 3502 ],

[ 'that', 109508 ],

[ 'violent', 653 ],

[ 'means', 4165 ],

[ 'destroy', 466 ],

[ 'organization', 2374 ],

[ 'society', 4067 ],

[ 'it', 73335 ],

[ 'has', 37865 ],

[ 'also', 44358 ],

[ 'been', 25381 ],

[ 'taken', 3043 ],

[ 'up', 12446 ],

[ 'positive', 1254 ],

[ 'label', 646 ],

[ 'by', 111829 ],

[ 'self', 2879 ],

[ 'defined', 2449 ],

[ 'anarchists', 203 ],

[ 'word', 5678 ],

[ 'derived', 1701 ],

[ 'from', 72865 ],

[ 'greek', 4577 ],

[ 'without', 5660 ],

[ 'archons', 10 ],

[ 'ruler', 617 ],

[ 'chief', 2130 ],

[ 'king', 7457 ],

[ 'political', 6967 ],

[ 'philosophy', 2758 ],

[ 'belief', 1572 ],

[ 'rulers', 687 ],

[ 'are', 76523 ],

[ 'unnecessary', 146 ],

[ 'should', 5113 ],

[ 'be', 61283 ],

[ 'abolished', 399 ],

[ 'although', 9286 ],

[ 'there', 22706 ],

[ 'differing', 231 ],

[ 'interpretations', 395 ],

[ 'what', 8581 ],

[ 'this', 58827 ],

[ 'refers', 1570 ],

[ 'related', 3535 ],

[ 'social', 4307 ],

[ 'movements', 1002 ],

[ 'advocate', 331 ],

[ 'elimination', 216 ],

[ 'authoritarian', 185 ],

[ 'institutions', 1021 ],

[ 'particularly', 2881 ],

[ 'state', 12905 ],

[ 'anarchy', 109 ],

[ 'most', 25562 ],

[ 'use', 14011 ],

[ 'does', 5220 ],

[ 'not', 44030 ],

[ 'imply', 257 ],

[ 'chaos', 331 ],

[ 'nihilism', 42 ],

[ 'or', 68948 ],

[ 'anomie', 7 ],

[ 'but', 35356 ],

[ 'rather', 4605 ],

[ 'harmonious', 28 ],

[ 'anti', 3103 ],

[ 'place', 5345 ] ] }
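In tranformFn above, remainingChars is never reassigned, so if a chunk boundary falls inside a word, the two halves are tokenized separately and may be counted as two partial words. The sketch below shows one way to carry the incomplete trailing word over to the next chunk and count it once the input ends. It is plain JavaScript with hypothetical names (createWordCounter, push, end) and a simple whitespace split instead of tokenizerEN, so it illustrates the carry-over technique rather than reproducing the tutorial's tokenization.

```javascript
// Hypothetical chunk-safe word counter: holds back the trailing partial word of
// each chunk and prepends it to the next one, so words are never split.
function createWordCounter() {
  const counts = {};
  let remaining = '';

  const countWords = (text) => {
    for (const word of text.split(/\s+/)) {
      if (word) counts[word] = (counts[word] || 0) + 1;
    }
  };

  return {
    // Process one chunk; everything after the last whitespace is held back.
    push(chunk) {
      const text = remaining + chunk;
      let cut = text.length;
      while (cut > 0 && !/\s/.test(text[cut - 1])) cut -= 1;
      remaining = text.slice(cut);
      countWords(text.slice(0, cut));
    },
    // Call once at the end of input to count the final held-back word.
    end() {
      countWords(remaining);
      remaining = '';
      return counts;
    },
  };
}

const counter = createWordCounter();
for (const chunk of ['anarchism origi', 'nated as a te', 'rm of abuse']) {
  counter.push(chunk);
}
const counts = counter.end();
console.log(counts);
```

In a Node Transform stream, the push logic would live in the transform callback and the end logic in the flush callback, which runs after the last chunk has been transformed.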

After preprocessing, the data is saved into files under the /corpus/ folder.

(async ()=>{

termLogger.groupBegin('get list of preprocessing files')

let listFiles = await indexDBStorage.getFileList('/corpus/');

termLogger.groupEnd()

termLogger.groupBegin('read one file from indexDB')

let tokens = await indexDBStorage.readFile(listFiles[0]);

termLogger.groupEnd()

termLogger.log([ listFiles.length , JSON.parse(tokens).length]);

})()

get list of preprocessing files: begin at Fri Mar 15 2019 16:42:56 GMT+0700 (Indochina Time)

get list of preprocessing files: end after 194 (ms)

read one file from indexDB: begin at Fri Mar 15 2019 16:42:56 GMT+0700 (Indochina Time)

read one file from indexDB: end after 0 (ms)

[ 3228, 1293 ]